
Yet another 25G home router build

I recently moved out of my shared student flat – where internet was centrally provided – and into my own flat. Finally, I get to choose my internet setup! The obvious choice is init7’s Fiber7 offering: unthrottled, symmetric, open peering, a static /48 IPv6, point-to-point (no PON), no fuss. An internet speed limited only by hardware, not by economics trying to carve out pricing levels for every pocket (100 Mbit/s on fiber, seriously?).

<rant>

Fiber7 costs 65 CHF/month, which is a mid-range price in Switzerland. 10G and 25G have the same monthly price; only the setup fee differs, because the hardware cost differs. 65 CHF is not too expensive (there are more expensive offerings), but also not the cheapest (there are cheaper offerings, but at worse service quality).

Especially when you look beyond the Swiss border and into Germany, and you see Deutsche Telekom offering Magenta 2000: 2G down, 1G up for 140 €/month. Twice the price for 1/10th of the speed.

What is more, this speed smells a lot like GPON. But in the announcement, Telekom says that they have XGSPON equipment on their end and that you need an XGSPON-capable router. But XGSPON can carry symmetric 10G! So is Telekom throttling XGSPON to GPON speeds? Maybe. Ein Schelm, wer Böses denkt (only a rogue would think ill of it). Telekom is clearly leaving itself room to give customers an artificial speed upgrade in the future (or even to sell the upgrade at a premium).

</rant>

All of this is to say: I am very happy init7 exists and provides this great service. Now I just need to get a router.

A number of other people have also written about their home router builds for their init7 connection:

Check them out! I got inspired by their blog posts, too. This post is just yet another home router build.

A small note on hardware before we start: There are two common form factors for network ports: RJ45 (the classic) and SFP (and its variants). At 10G, people tend to use both SFP+ (data center) and RJ45 (home lab) connectors. At 25G, however, SFP28 is the go-to option (but Cat8 would work, too). Wikipedia has a full table showing all the different SFP types. Here is an extract:

| Port | Speed |
|---|---|
| SFP | 1G |
| SFP+ | 10G |
| SFP28 | 25G |

Initial reconnaissance

My original plan was to simply go for 10G and buy an off-the-shelf appliance as a router: the DEC740 from Deciso, the makers of OPNsense, the router and firewall I wanted to use. It is fanless, quiet, and has a low power consumption of ~15W, and costs 750 €. The simple router boxes other ISPs give their customers are also in the 10-15W range.

But I needed to buy a network card anyway to connect my existing NAS to the router. So I started shopping. Initially, I was searching for 10G cards. Most 10G cards cost around 200–250 CHF. SFP28 cards tend to cost 300–350 CHF. (As of December 2023, YMMV.)

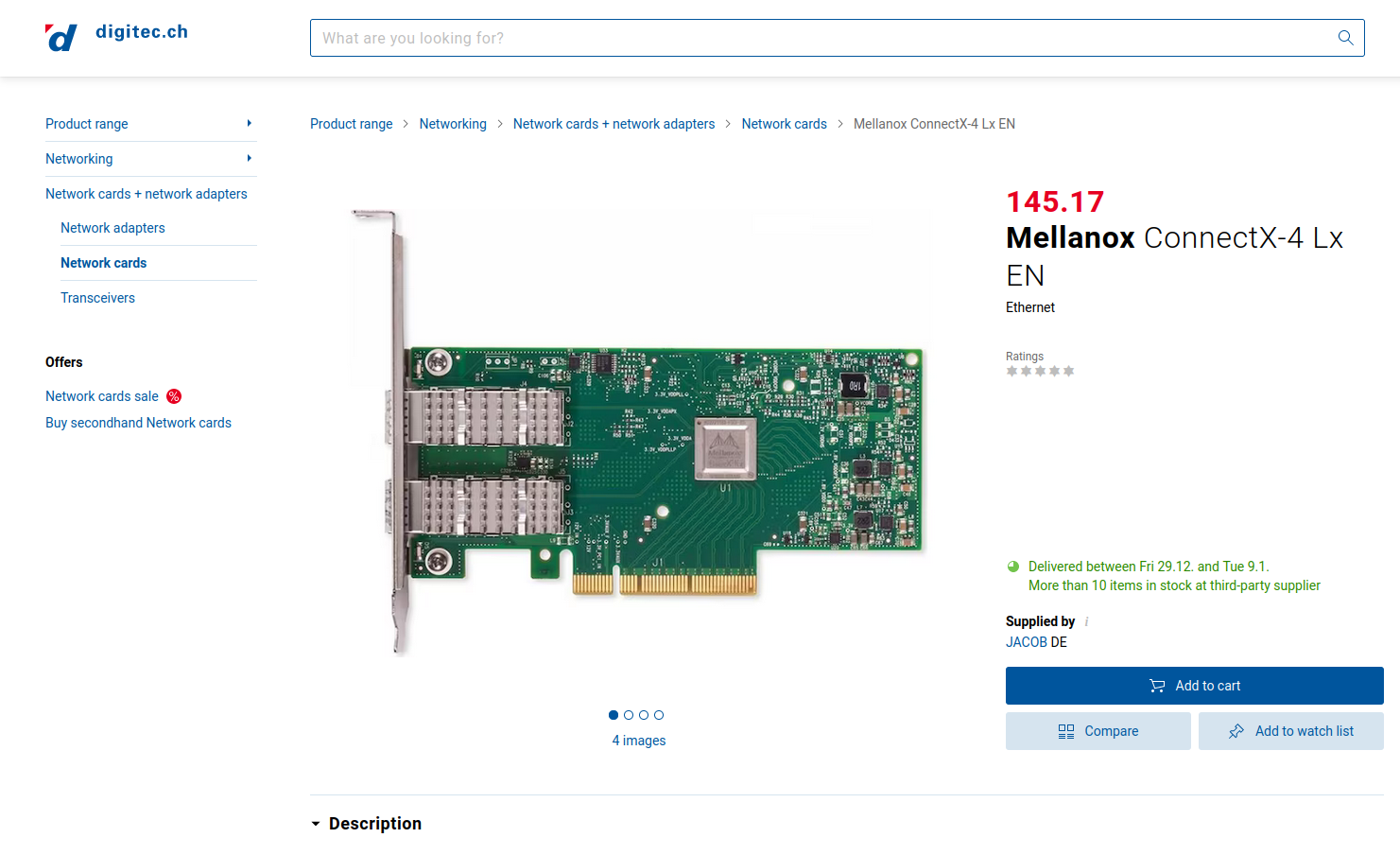

Eventually, I came across the Mellanox ConnectX-4 Lx on Digitec:

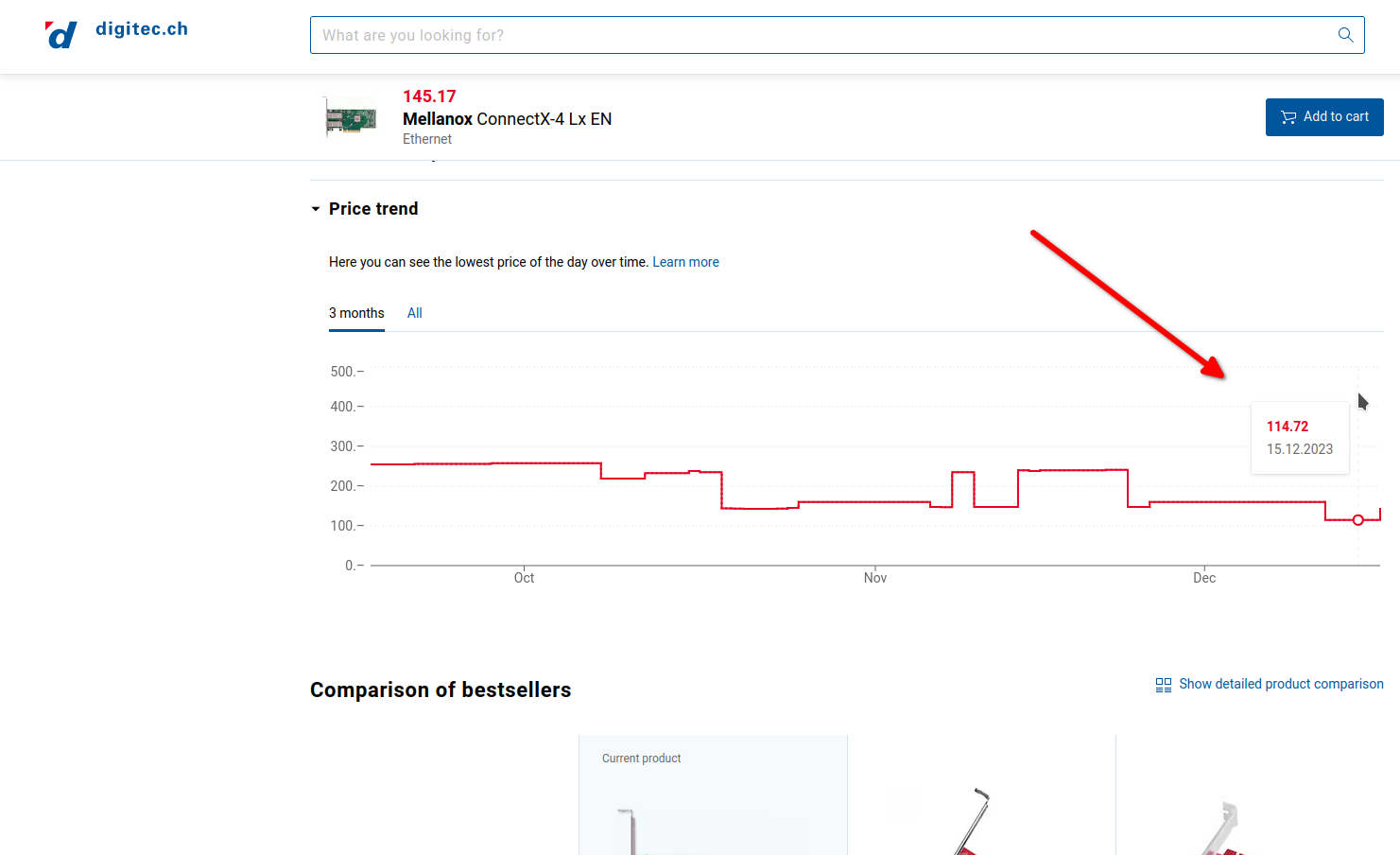

Or rather, I took this screenshot too late. I actually came across it a few days earlier when it was available not for 145 CHF but for 115 CHF! (This likely was some temporary deal from supplier jacob.de.)

The Mellanox ConnectX-4 Lx has two SFP28 ports, not SFP+ ports. I was hooked. If this 25G card is cheaper than the 10G cards (115 < 200), then let’s buy this one! And if my NAS has a 25G networking card, it would be a shame if my router only had 10G, right? Sure, SFP28 is backwards compatible with SFP+, but still.

Thus, I gave up on my “buy an off-the-shelf appliance and be done with it” plans and started researching a custom router build.

Spoiler: at the end, it was way more expensive than the DEC740.

But I learnt a lot about the hardware, and it was enormous fun doing the shopping research for parts and finally the assembly!

Settling on a NUC

My first idea was to build a tower-sized computer. Michael Stapelberg’s blog post drew my attention to the AMD Ryzen 7 Pro 5750GE, with only 35W TDP! Unfortunately, it was out of stock wherever I looked.

Later, Scott Diers’s build with the Intel NUC 9 Pro inspired me to take a look at NUCs instead of a full tower. I liked the smaller form factor. Before reading Scott’s post, I simply wasn’t aware that there exist NUCs that have PCIe slots (and you need a PCIe slot for the network card).

The reason that these NUCs have a PCIe slot is that they are designed for gamers. We can (ab)use that: instead of putting in a graphics card, we can put in a networking card!

Sadly, the “Pro” series that Scott used does not come with a PCIe slot anymore (for the 11, 12, 13 versions). Therefore, I had to go for the more expensive “Extreme” series. The Pros cost around 500 CHF while the Extremes cost around 1000 CHF…

So let’s compare the two most relevant NUC Extremes. The i9 variant of the NUC 12 Extreme and the NUC 13 Extreme compare as follows (an i7 variant also exists):

| | NUC 12 Extreme | NUC 13 Extreme |
|---|---|---|
| Launch | February 2022 | November 2022 |
| CPU | 12th Gen i9-12900 | 13th Gen i9-13900K |
| Core count | 16 cores (8P+8E), 24 threads | 24 cores (8P+16E), 32 threads |
| TDP | 65 W | 125 W |

In all other regards (that are relevant to me) they are similar: 1 PCIe x16 Gen5 slot, 2 Thunderbolt 4 ports, lots of USB-A ports, up to 64 GB RAM (though 12 is DDR4, 13 is DDR5), 3 M.2 slots, two RJ45 ports (2.5 Gbit/s and 10 Gbit/s).

The only difference between the i9 variant and the i7 variant (other than the CPU) is the 10 Gbit/s port, which is only present on the i9 variant. I chose the i9 variant because I wanted the additional RJ45 port.

In the end, I chose the NUC 12 Extreme simply because the NUC 13 Extreme was not available in a reasonable timeframe from any online store. Note that the TDP is lower, but the TDP doesn’t say much about the idle power consumption.

Having a 16-core home router is a waste. Let’s use it for other computing tasks as well! I decided to not build a router anymore, but instead build a server that happens to be a router. In practice, this means that the bare metal is running Proxmox as a hypervisor, and one of the VMs is running OPNsense as the router and firewall.

In total the server will feature: 16 cores, 64 GB RAM, 8 TB SSDs (but as RAID-1, so only 4 TB usable). A compute-heavy complement to my existing storage-heavy NAS (which I built a few years ago).

(Granted, it is still a desktop CPU not a server CPU. It has “only” 8 performance cores, the other 8 are energy-efficient cores. An Intel Xeon or AMD Epyc CPU is a very different beast.)

The final build

Fast forward, I ended up with the following parts for the router server:

| Part | Price |
|---|---|
| Intel NUC 12 Extreme Kit | 963 CHF |
| 2x Samsung 990 Pro SSD 4TB | 2x (265 € ~ 250 CHF) |
| Corsair Vengeance RAM 2x 32 GB | 117 CHF |
| Mellanox ConnectX-4 Lx | 115 CHF |
| Σ | 1695 CHF |

To connect the router to the optical termination outlet (OTO) of my flat, and to upgrade my NAS to 25G and connect it to the router, I also bought the following parts:

| Part | Price |
|---|---|
| LC UPC to LC APC, simplex, single mode, 2m patch cable | 3.30 € ~ 3 CHF |
| SFP28 optical transceiver | 106 € ~ 99 CHF |
| SFP28 DAC Cable, 2m | 37 € ~ 35 CHF |
| Mellanox ConnectX-4 Lx | 115 CHF |
| Σ | 252 CHF |

I bought all of the above parts on digitec.ch, amazon.de, and fs.com.

You can also get the fiber patch cable and the transceiver directly from init7, for 77 CHF (10G) or for 222 CHF (25G) (because SFP28 transceivers are more expensive). With a referral code, you get 111 CHF off the hardware.

If you (like me) buy the parts yourself, you need to do your research to make sure you buy the right cable and transceiver. The wall outlets (OTO) in Switzerland are generally LC APC. SFP transceivers are almost always UPC (aka. PC), but can be either SC or LC. APC is green, UPC is blue. fs.com’s blog is a great resource to learn about the various types of cables and connectors. For the transceiver, follow the specs provided by init7 here and here. People seem to buy fiber equipment either on fs.com or on flexoptics.net.

Assembling it

Here are a few photos from assembling the server.

The inside: You can see the two SSDs on the left, the big silver heat sink that hides the CPU behind it, and the two pieces of RAM on the right. The box on the very right (facing the front of the NUC) is the power supply. Lying at the front, you can see the box with the fan that goes on top of the CPU/SSD/RAM. The two grey bars will go on top of the SSDs, meaning that you don’t need dedicated heatsinks for the SSDs (also, they wouldn’t fit).

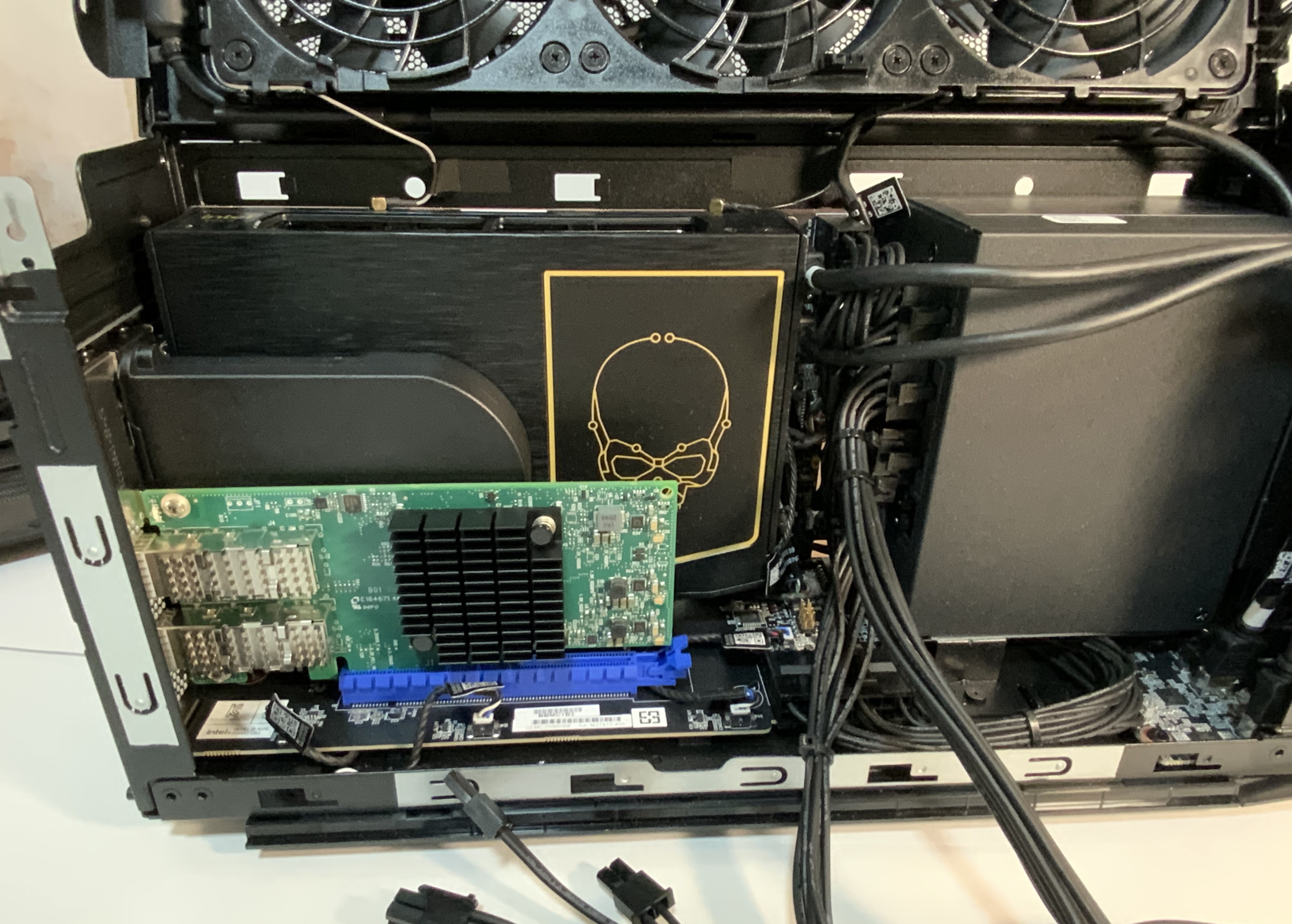

Installing the Mellanox card in the blue PCIe slot:

The view of the back: The top RJ45 port is 10 Gbit/s, the bottom one is 2.5 Gbit/s. On the right, the two SFP28 ports of the Mellanox card. Matchstick for scale.

Installing it

This is what the NUC looks like with everything installed:

And the back: The yellow fiber cable goes into the wall and out to init7. The black Direct Attach Cable (DAC) below goes into my NAS. The blue copper cables go into switches.

Because the NUC Extreme is designed for gamers, it has LEDs (of course it does):

Luckily, there is a physical button on the bottom of the NUC allowing you to turn the LEDs on and off. No need to install any drivers.

As you can see e.g. on its Digitec page, the front of the NUC usually features a skull. Weird taste, probably reaching only a subset of gamers, and an even smaller subset of the general population, but okay. Luckily, the skull is just a small semi-transparent mask. You can unscrew the front of the panel and take the skull mask out, leaving you with the full, square LED front (as seen in my picture). (I learnt this from this review of the Intel NUC 11 Extreme.) Optionally, you can cut your own thin sheet to mask the light to another shape. I haven’t tried this yet since I leave the LEDs off anyway.

Speedtest

So does it work? Does it make the internet go swoosh?

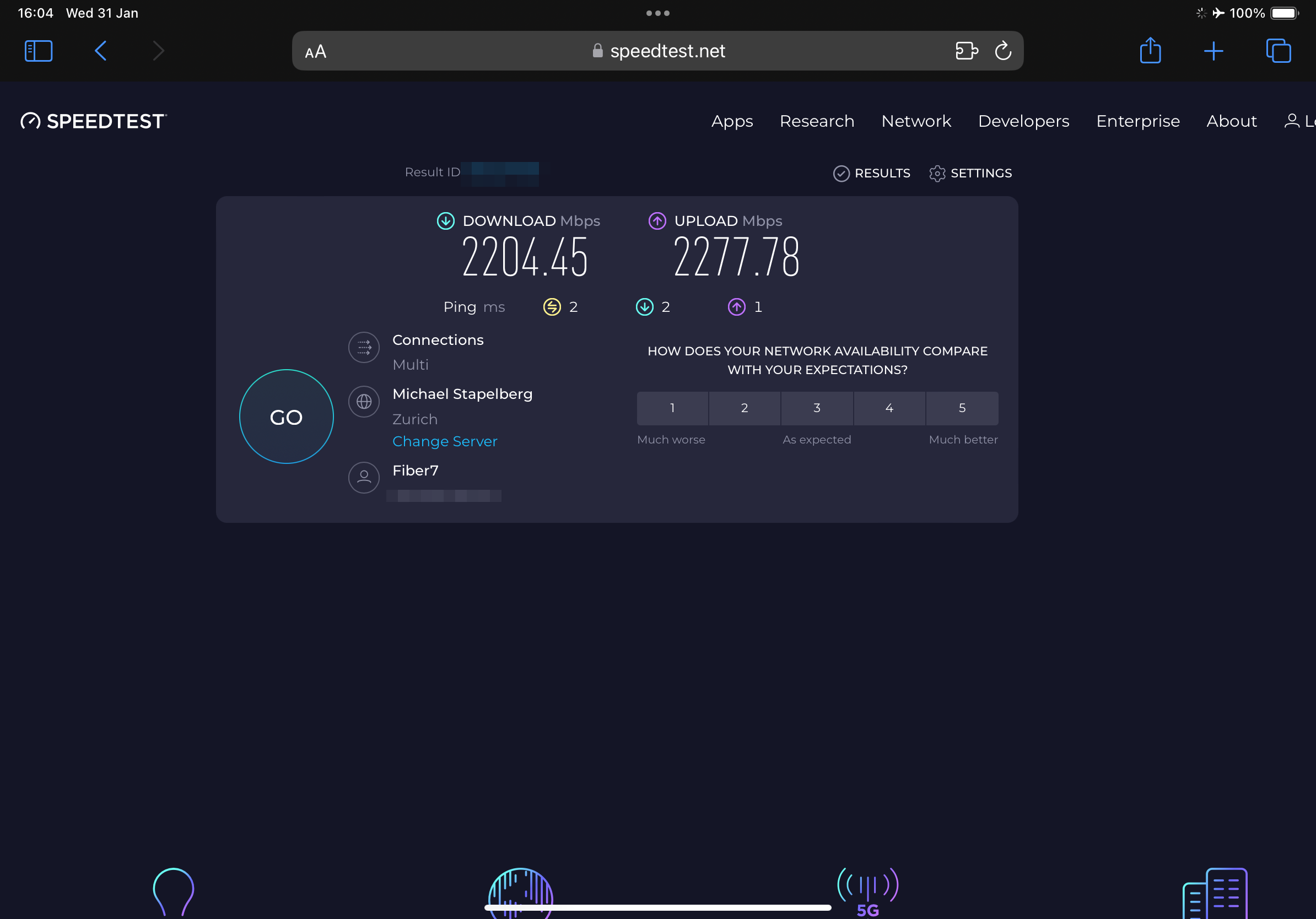

First, an iPad Pro, with a 2.5 Gbit/s USB-C to RJ45 adapter:

(Note that unlike Android, iPadOS doesn’t show a dedicated Ethernet status icon in the top right, as it does for WiFi.)

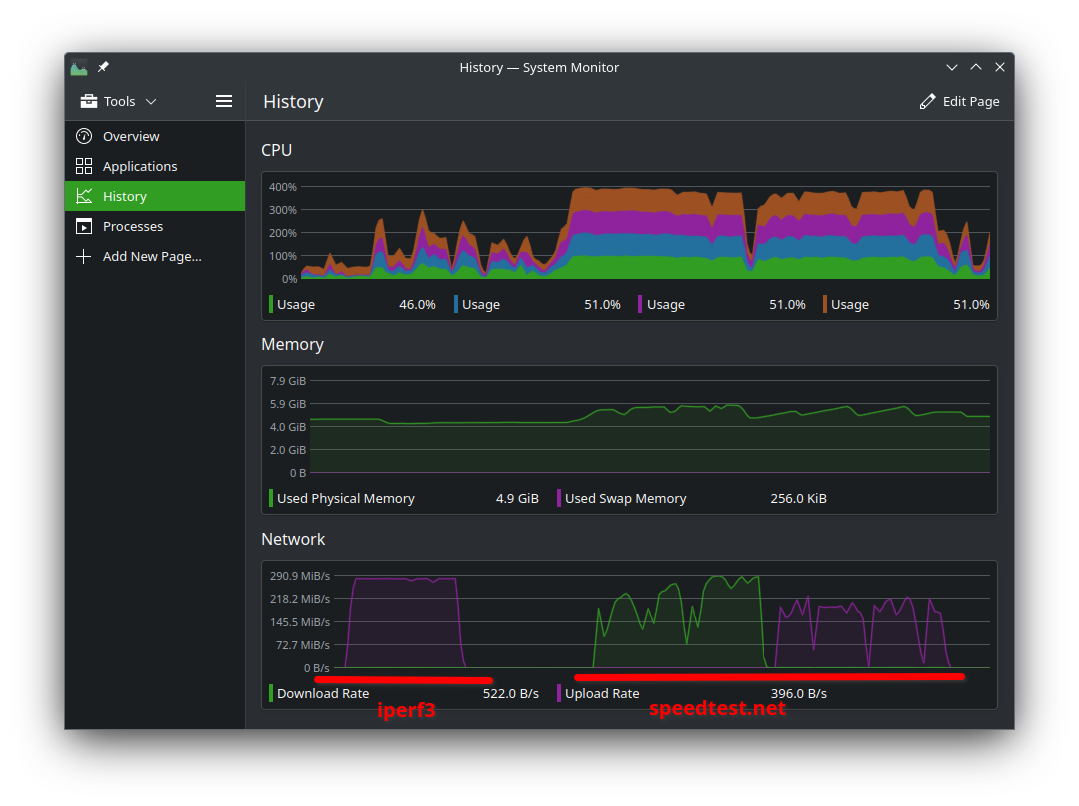

Next, the system monitor on my laptop when connected with the 2.5 Gbit/s adapter (290 MiB/s = 2430 Mbit/s):

The first half shows an iperf3 test to speedtest.init7.net. The second half shows a speedtest.net test in Firefox. Clearly my CPU (a 7th-gen i5 with 2 cores and 4 threads) is the bottleneck. Speedtest.net, Firefox, the Snap sandbox, the network stack, or something else somewhere, makes the CPU go more spinny than iperf3 in a terminal does. Either I can buy a new laptop, or someone can fix the software.
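The system monitor reports binary units (MiB/s), while link speeds are quoted in decimal bits per second, so the 290 MiB/s figure needs a small conversion. Here is a minimal sketch of that arithmetic; the function name is just my own illustration.

```python
MIB = 1024 ** 2  # bytes in one mebibyte (binary unit)


def mib_per_s_to_mbit_per_s(mib_per_s: float) -> float:
    """Convert a rate in MiB/s (binary) to Mbit/s (decimal)."""
    bits_per_second = mib_per_s * MIB * 8
    return bits_per_second / 1_000_000


if __name__ == "__main__":
    rate = mib_per_s_to_mbit_per_s(290)
    # Compare against the nominal 2500 Mbit/s of the USB-C adapter.
    print(f"290 MiB/s is about {rate:.0f} Mbit/s")
    print(f"share of a 2.5 Gbit/s link: {rate / 2500:.0%}")
```

In other words, the laptop comes close to saturating the 2.5 Gbit/s adapter in the iperf3 half of the graph.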

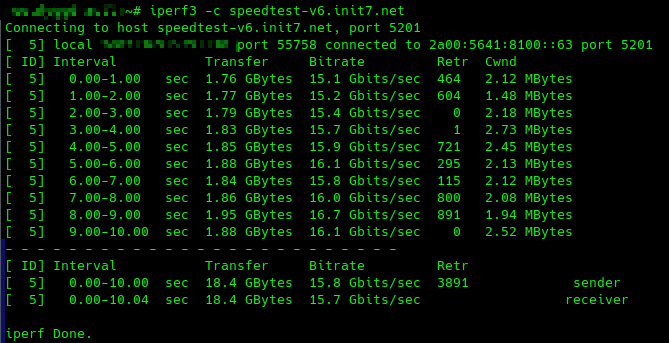

Apropos iperf3 in a terminal. Here is iperf3 running on the shiny new router:

A mere 15–16 Gbit/s. Far from the 25 Gbit/s we were hoping for. But at least more than 10 Gbit/s, so not a complete waste, I guess?

Doing an iperf3 between the new router and the old NAS, both with their SFP28 cards and the SFP28 DAC cable, I also only get ~15 Gbit/s. So the bottleneck is not init7, but somewhere on my setup. I just need to find the time to track it down. Using PCI passthrough for the NIC (since OPNsense is running in a VM) already improved the performance by ~1 Gbit/s compared to using Proxmox’ virtual bridge.
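One cheap experiment for tracking this down is to repeat the test with different numbers of parallel streams and compare the results, since a single stream can be limited by one CPU core. Below is a rough sketch of how that could be scripted, not what I actually ran. It assumes `iperf3` is installed and an iperf3 server is listening on the other end; the helper names and the default values are my own, and it only uses the standard `-c`, `-P`, `-t`, and `-J` (JSON output) client options.

```python
import json
import subprocess


def iperf3_command(host: str, streams: int = 4, seconds: int = 10) -> list[str]:
    """Build an iperf3 client call with parallel streams and JSON output."""
    return ["iperf3", "-c", host, "-P", str(streams), "-t", str(seconds), "-J"]


def received_gbit_per_s(report: dict) -> float:
    """Extract the receiver-side throughput of a TCP test in Gbit/s."""
    return report["end"]["sum_received"]["bits_per_second"] / 1e9


def run(host: str, streams: int) -> float:
    """Run one test against host and return the measured Gbit/s."""
    result = subprocess.run(
        iperf3_command(host, streams),
        capture_output=True,
        text=True,
        check=True,  # raise if iperf3 exits with an error
    )
    return received_gbit_per_s(json.loads(result.stdout))
```

Calling `run(host, n)` for n = 1, 2, 4, 8 against the NAS would show whether the ~15 Gbit/s ceiling moves with the stream count. If it does not, the limit is more likely somewhere else in the setup (VM, driver, or PCIe configuration).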

Conclusion

In this post I described my journey to building a home router. Compute-wise, the new server is more powerful than originally envisioned. That’s fine, I will use it to run VMs, e.g. a remote desktop to extend the lifetime of my old laptop. Speed-wise, the router is practically capable of 15 Gbit/s, and theoretically capable of 25 Gbit/s (once I manage to tune it).

Do I need 15 Gbit/s? No. Working, reliable 2.5 Gbit/s would be nice though.

In practice, the download performance for many things is still at most 1 Gbit/s, and 1.5 Gbit/s if you are lucky. I briefly tested this by downloading various installation images (Debian, Ubuntu, LineageOS, GrapheneOS).

It is a chicken-and-egg problem. If no one has fast internet at home, no one will provide fast servers, and no one will optimise the software stacks and transport protocols to “just work”. By having the hardware for 25 Gbit/s, we have eliminated the first bottleneck. Next, we need to eliminate the software and configuration bottlenecks.

Hopefully, as more and more people have faster internet, more developers will optimise for it, and those speeds will become usable out-of-the-box. I am looking forward to the day when I can download the 10 GB folder of photos my friend shared with me from our holiday trip at 2.5 Gbit/s in 30 seconds, and not in 2 minutes at the currently realistic 0.7 Gbit/s.

And maybe even in 8 seconds at 10 Gbit/s, if I decide to spend way too much money on a Thunderbolt-to-SFP+ adapter for my laptop.
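The download times above are back-of-the-envelope numbers. A minimal sketch of the calculation, assuming decimal gigabytes and ignoring protocol overhead (the function name is only illustrative):

```python
def transfer_seconds(size_gb: float, link_gbit_per_s: float) -> float:
    """Seconds to move size_gb gigabytes over a link, ignoring overhead."""
    size_gbit = size_gb * 8  # 1 byte = 8 bits
    return size_gbit / link_gbit_per_s


# The 10 GB photo folder at the speeds discussed in this post.
for speed in (0.7, 2.5, 10.0, 25.0):
    print(f"{speed:>4} Gbit/s: {transfer_seconds(10, speed):6.1f} s")
```

Strictly speaking, 2.5 Gbit/s gives 32 seconds rather than 30, and 0.7 Gbit/s gives a bit under 2 minutes, so the figures in the text are rounded.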


2024-02-03 19:00 +0000